Open the M&S App when in-store for the Scan, Pay, Go option to be available.

M&S is the go-to choice for customers seeking convenience food. However, long queues have been a deterrent, prompting M&S to explore technology to increase foot traffic, streamline operations, and enhance the in-store experience.

Like many large organizations, Marks & Spencer faced challenges in rapid innovation and product launches. They approached me to investigate how technology could improve the end-to-end customer experience (CX) in M&S Simply Food stores.

I worked hands-on as a CX researcher and UX designer, collaborating with a multidisciplinary team drawn from in-house staff and an integrated development agency.

Insights from a survey I conducted among M&S store managers and staff at the project’s outset revealed a critical issue: M&S customers would wait in line at the till for only six minutes before abandoning their shopping.

“When it comes to queuing, people use previous experiences to decide whether they will stay in the queue or leave it”

Interviewed M&S store staff shared their experiences, highlighting the need for a solution:

“Lunchtime is manic; if there’s a queue, customers dump the basket and leave. We have to dispose of all perishable refrigerated items as a result!”

of interviewed customers said that long queues in-store would make them less likely to return.

I presented the insights report to the Senior Leadership team, and organized and moderated a series of design sprints involving teams from Brand Marketing, Product Design, Sales, Customer Service, and Engineering.

Working closely with the engineering team, I designed a working prototype for testing.

Through staff trials and three in-store trials, I continued to refine and improve the solution.

I conducted task-based observational studies in a real shop setting (M&S Edgware Road).

Participants shopped using the Scan Pay Go prototype, evaluating the onboarding process, purchasing without bags or baskets, exiting the store with a “receipt” screen, basket management, and the overall experience of bypassing the tills.

During each session, participants wore a head-mounted eye tracker so that we could see how they interacted with the app, the store, and the products.

It was amazing to see customers in action through their own eyes. The sessions provided insights on product packaging, the positioning of shopping bags, how and where to signpost the app and, of course, our prototype.

Insights from usability testing sessions informed rapid app design iterations, leading to a final MVP ready for launch. The pilot initially ran in six stores and later expanded to over 300 stores, resulting in a Mobile Pay Go transaction every 3 seconds during lunchtime.

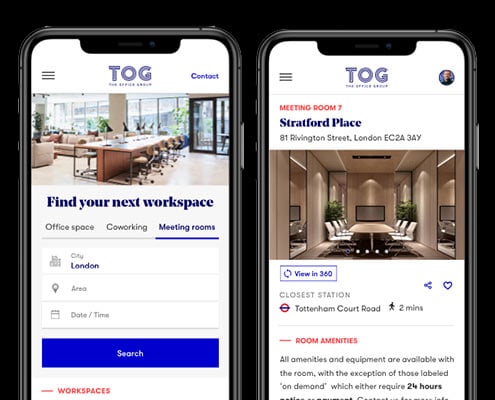

We developed an app that allows customers to quickly scan products, make immediate payments, and leave without queuing. This not only improved convenience but also facilitated quicker store navigation for customers.

The insights gathered from my research played a pivotal role in the creation of a promotional video for the Scan, Pay, Go service. This video was the result of a collaborative effort between my team and the Brand & Marketing team. You can view an early concept of the video below.

M&S retail, operations and property director Sacha Berendji said:

“M&S is changing to become a digital first retailer with industry-leading store operations – and Mobile Pay Go is a really exciting part of that.”

Understanding how customers interacted with the store environment while using the prototype app was key to improving it. For this reason, I introduced a wearable, mobile eye-tracking system called Tobii Pro Glasses.

Customers were asked to wear a pair of clear-lens glasses and to carry on shopping as normal. Meanwhile, the glasses recorded gaze points and streamed the session live so we could follow every step of the action, whether on the app or on the shelves.

The research moderator could follow the action on a tablet and discreetly follow the customer at a distance, ready to step in if needed. The rest of the team was positioned in another room, where the testing session was streamed live on large HD screens.

This tool showed us exactly what customers were looking at in real time as they moved freely in store, and helped us understand how they interacted with both the prototype and the physical space surrounding them.

Due to the highly sensitive nature of the information in my research, my portfolio provides only a simplified overview of the insights and work involved. If you’d like to delve deeper into my work, discuss potential projects, or explore my experience further, please don’t hesitate to reach out!